AI Companions: Navigating the Complex Relationship Between Humans and AI

Introduction

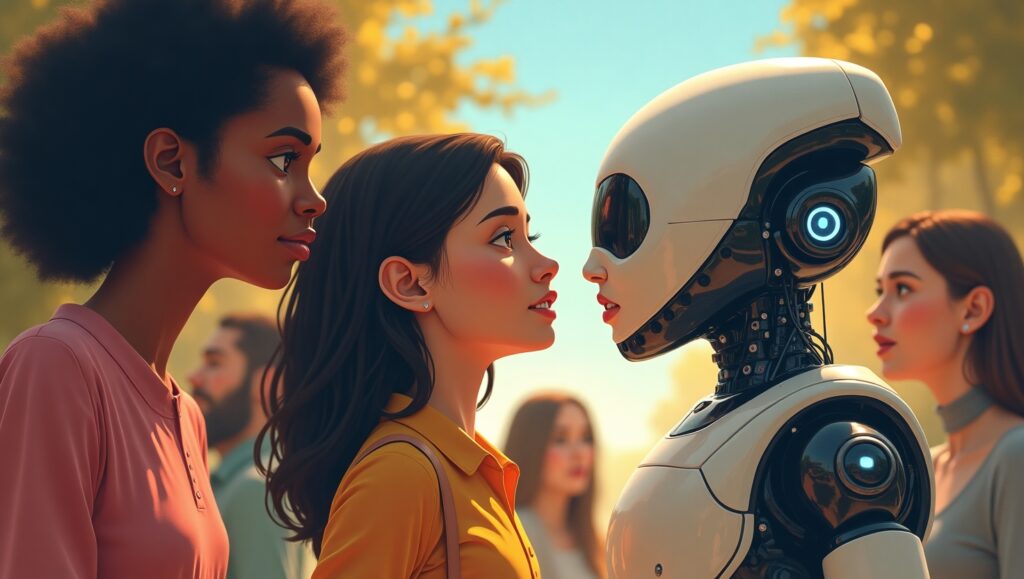

In recent years, AI companions have moved from science fiction into many people’s daily lives. Programs like GPT-4o and Claude AI have drawn users by offering companionship, advice, and even emotional support. Their rise marks a significant shift in how we perceive technology’s role in everyday life: a growing number of people have formed emotional connections with AI systems, viewing them not merely as tools but as confidants. These relationships call for a closer look at what AI companions mean for individuals and for society.

Background

AI companions have evolved significantly since the first chatbots, which offered only basic conversational interfaces. Newer models such as GPT-4o are far more capable: built on large neural networks, they can follow and generate human-like conversation well enough that users experience them less as passive digital assistants and more as active social entities. Claude AI, another notable model, is often cited for fitting easily into users’ daily routines, providing immediate responses and personalized interactions that can feel authentically human.

OpenAI’s decision to retire GPT-4o sparked considerable emotional backlash, highlighting how deeply users can bond with AI companions. Many users expressed grief akin to losing a friend or loved one, which raises ethical and societal questions about dependency and mental health. As detailed in a recent TechCrunch article, the attachment was far from trivial: numerous users described their AI companion as a vital part of their routine, peace, and emotional balance.

Trending Emotional Attachments

The emotional attachments formed with AI companions can be profound, driven by users’ desires for connection and validation. These systems often become embedded in users’ lives, offering a sense of stability and understanding. In some cases, users have likened the removal of an AI companion like GPT-4o to a personal loss. Relying on AI for emotional support can be compared to a tree leaning on a support structure: the support is beneficial, but it can limit the tree’s ability to stand strong on its own.

However, these attachments come with their own set of concerns. Dependence on AI for emotional support can slide into something like codependency, in which users feel increasingly reliant on their digital companions for mental health stability. This raises questions about potential harm to mental health, a concern echoed by experts like Dr. Nick Haber and further detailed in lawsuits against OpenAI. The aim should be balance: interactions with AI should supplement, rather than hinder, personal growth and mental health.

Insights into Human-AI Interaction

As AI becomes more prevalent in daily life, reliance on systems such as Claude AI is both a boon and a challenge. These systems offer efficient answers and a degree of emotional responsiveness that enhance the user experience. The same qualities raise concerns for vulnerable groups, who may turn to AI companions for support in place of human interaction. The potential dangers of that substitution, such as deepening loneliness and social isolation, need to be addressed.

Fostering healthy relationships with AI is key. Users need to understand the limitations of AI models and recognize them as tools rather than replacements for human connection. That understanding can prevent unhealthy dependence and keep AI technology a supportive element in users’ lives rather than a crutch.

Future of AI Companions

Looking ahead, AI companions are poised to become even more pervasive, integrating further into society and potentially reshaping how we approach mental health resources. As these systems grow more sophisticated, the ethical questions surrounding their use will become more pressing. Regulatory frameworks will be needed to set boundaries that protect users while still promoting innovation.

Future innovations may see AI companions serving as accessible, on-demand mental health support, particularly in countries where traditional mental health resources are scarce. Embracing these advancements, however, means weighing the technological potential against the ethical implications, so that AI companions benefit the people who rely on them.

Call to Action

As technology evolves, so too must our dialogue about its role in our lives. Readers are encouraged to reflect on their own experiences with AI companions and to share the insights that matter most to them. By promoting a broader discussion of responsible and healthy interaction with AI, we can better navigate the balance between support and dependency.

By sharing thoughts and experiences here, we can foster a community that values innovation while looking after the well-being of everyone who uses AI technologies. Engage with us and help shape the future of this rapidly evolving field.